2021/22 — San José State University

— Addressing Quality Online Course Design


Proposal Summary: At San José State University, we proposed to provide training along with a faculty-mentor support structure for the faculty participants in the program. We completed the goals of this project through a combination of Quality Matters workshops, campus-based webinars/recordings, and faculty team leaders. The campus continues to expand its support with guidelines that faculty can use as they develop their online courses. The quality assurance program provides extensive training, faculty expertise, and guidance on redesigning a course in line with best practices as identified in the Quality Matters (QM) rubric. As this academic year was primarily conducted online, this program assisted faculty with the design of those courses.

Campus Goal for Quality Assurance

The EOQA goals are in line with the CSU’s Graduation Initiative 2025, specifically the measure of ensuring that effective use of technology is part of every CSU student’s learning environment. Additionally, our approach follows guidelines proposed in SJSU’s Four Pillars of Student Success that promote the development of:

- richer and more readily accessible on-line supplemental study materials;

- more elaborate and interactive homework and self-check instructional materials;

- and more engaging in-class teaching strategies.

This proposal focused on developing a standard that faculty teaching online and hybrid courses can use to reflect upon their current course design and make the revisions needed to reflect best practices. The campus has multiple needs in supporting quality assurance efforts:

- 1: Develop materials and resources that faculty can access and use to guide course design.

- 2: Provide professional development opportunities to increase faculty awareness regarding quality assurance.

- 3: Build a group of faculty that can become experts in quality assurance and provide mentoring for new faculty.

Quality Assurance Lead(s)

- Jennifer Redd, Project Facilitator

- Ravisha Mathur, Faculty Quality Assurance Team Leader

Campus Commitment Toward Sustainability of QA Efforts

- Developed multiple Canvas course templates based upon Quality Matters Principles

- Encourage quality assurance principles in instructional design consultations

Summary of Previous QA Accomplishments

This quality assurance program was the ninth iteration on campus. The previous cohort participated during the 2020-21 academic year. A variety of quality assurance efforts continue to expand on campus.

- Effort 1: A faculty cohort completed one or two Quality Matters trainings: Applying the Quality Matters Rubric and Improving Your Online Course. A previous cohort completed the Peer Reviewer Course. Additional information about last year's effort can be found in the Quality Assurance ePortfolio.

- Effort 2: Increase awareness through outreach activities. This includes posting resources on the eCampus website and through participation in informational webinars. It also includes promoting workshops and encouraging attendance through flyers and presentations at campus events.

- Effort 3: The rubric is provided as a resource for faculty in a password-protected Canvas course. Also, it serves as a guide when instructional designers consult with faculty members on course design.

- Effort 4: Encourage faculty and staff who have completed Peer Reviewer Training to become Quality Matters Peer Reviewers.

- Effort 5: A Canvas course template that adheres to Quality Matters Standards is available to all faculty.

Course Peer Review and Course Certifications

- Peer Review: Faculty members were assigned a partner. Using a rubric, they provided a peer review for each other's course.

- Faculty Lead Review: A faculty leader provided each faculty member with an informal course review.

Faculty Participants

| Name | Course Number | Course Name |
|---|---|---|
| San-hui Chuang | CHIN 25B | Intermediate Chinese |
| Nisha Garud Patkar | JOUR 61 | Writing for Print, Electronic and Online Media |
| Tabitha Hart | COMM 285A | Teaching Associate Practicum I |

Accessibility/UDL Efforts

- One of the recordings during the year-long program focused on accessibility. This included document preparation, presentations, and LMS features.

- One of the recordings during the year-long program focused on Universal Design for Learning. This included tips and examples.

- Workshops throughout the year that introduce faculty to new teaching methods and to the LMS include time for discussions about developing accessible instructional materials.

Feedback was gathered following each of the webinars that were part of the year-long cohort. Participants answered two questions in the Discussions section of the course.

- Did the ________ recording help you to identify something you would like to include, change, or develop in your course?

- Share 1 question or comment regarding the ________ webinar.

Next Steps for QA Efforts

- Expand the number of courses that are Quality Matters certified

- Provide guidance to faculty interested in having their courses certified

- Encourage faculty participation in Quality Matters Trainings

Training Completions

The following table summarizes all of the SJSU faculty and staff Quality Assurance training completions that occurred during the 2021/22 academic year.

| Training | Number of Completions |
|---|---|
| Advanced QLT Course in Teaching Online | 10 |
| Applying the Quality Matters Rubric (APPQMR) | 2 |
| Designing Your Online Course (DYOC) | 1 |
| Improving Your Online Course (IYOC) | 6 |
| Introduction to Teaching Online Using the QLT Instrument (QLT1) | 2 |
| Assessing Your Learners (AYL) | 1 |
| Connecting Learning Theories to Your Teaching Strategies (CLTTS) | 1 |
| Creating Presence in Your Online Course (CPOC) | 1 |
| Designing Your Blended Course (DYBC) | 1 |
| Evaluating Your Course Design (EYCD) | 1 |
| Orienting Your Online Learners (OYOL) | 1 |
| Gauging Your Technology Skills (GYTS) | 1 |
| Accelerated K-12 Reviewer Course for Higher Education (AK12RHE), Fifth Edition | 1 |
| Designing Your Online Course (DYOC) | 1 |

The CSU QA Student Online Course Survey was distributed via Qualtrics to the classes taught by two of the three 2021-2022 EOQA participants. The third participant used the training to update her summer 2022 class and therefore did not distribute the survey to the students she taught during the 2021-2022 academic year. The survey was completed by 6 students in 2 lower division courses. Three respondents were female, one was male, and two identified as “other.” There was one freshman, along with three sophomores and two juniors. Five out of six respondents had previously taken at least one online course. Four respondents were Asian, one was Hispanic or Latino, and one was Caucasian.

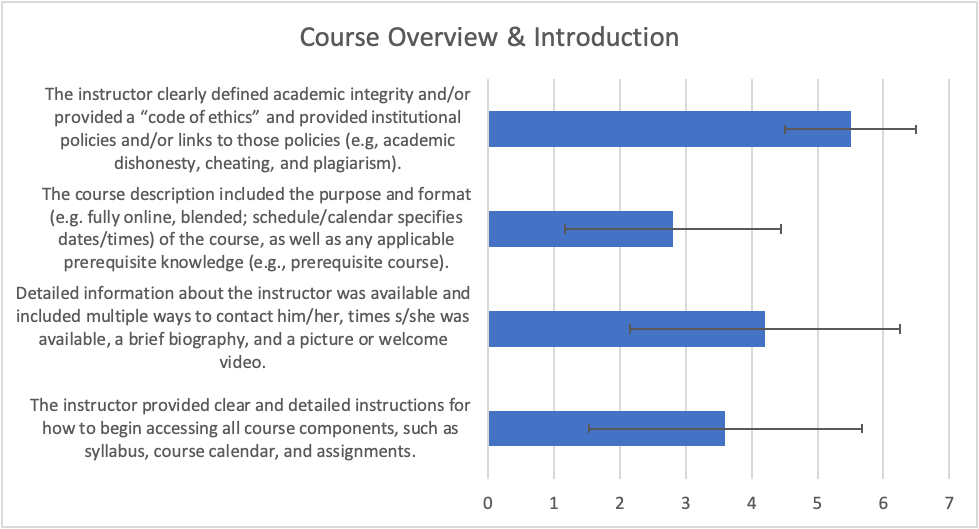

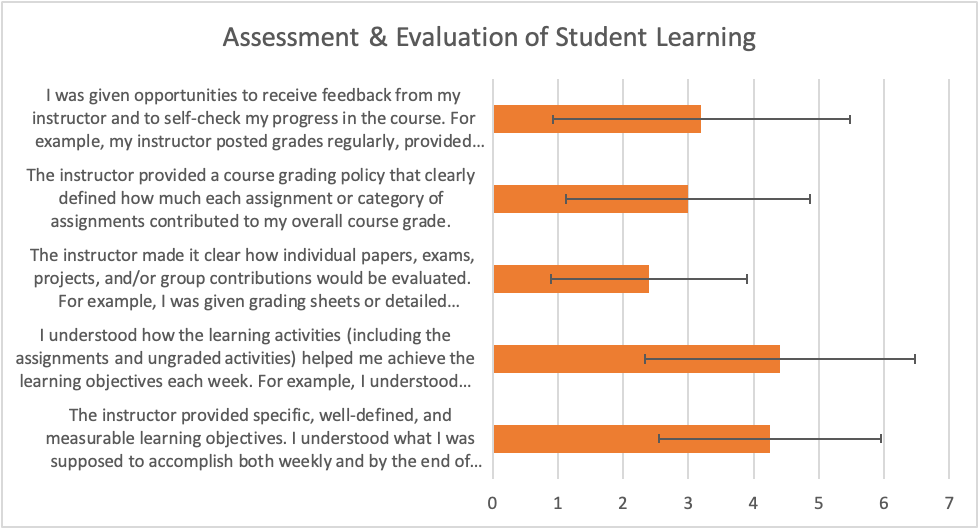

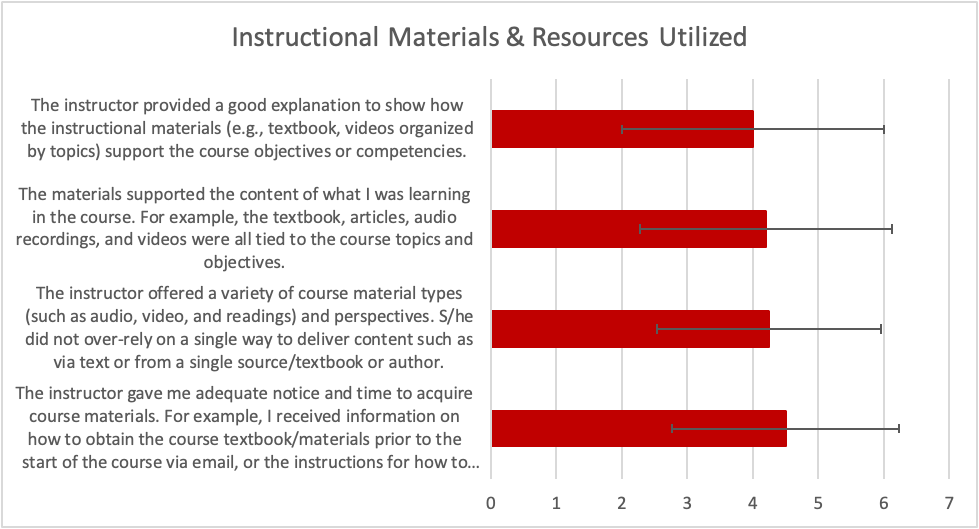

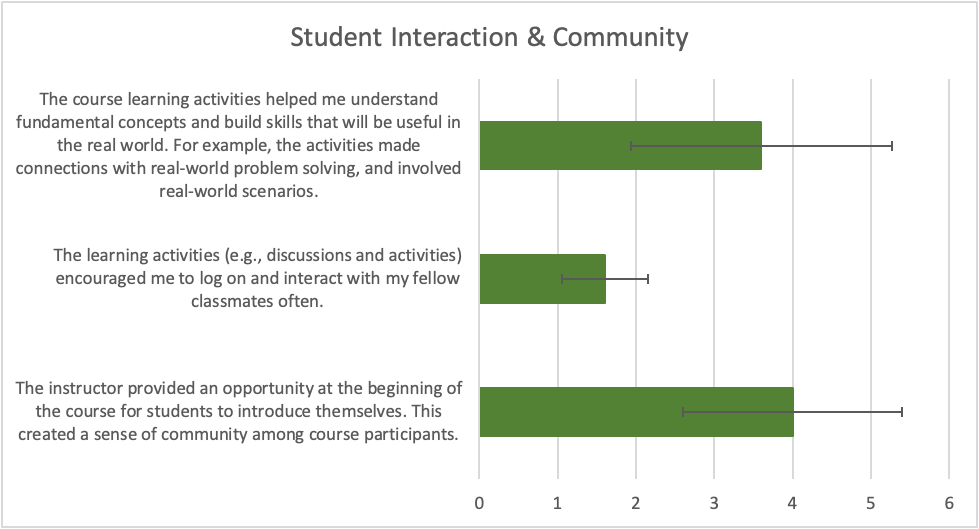

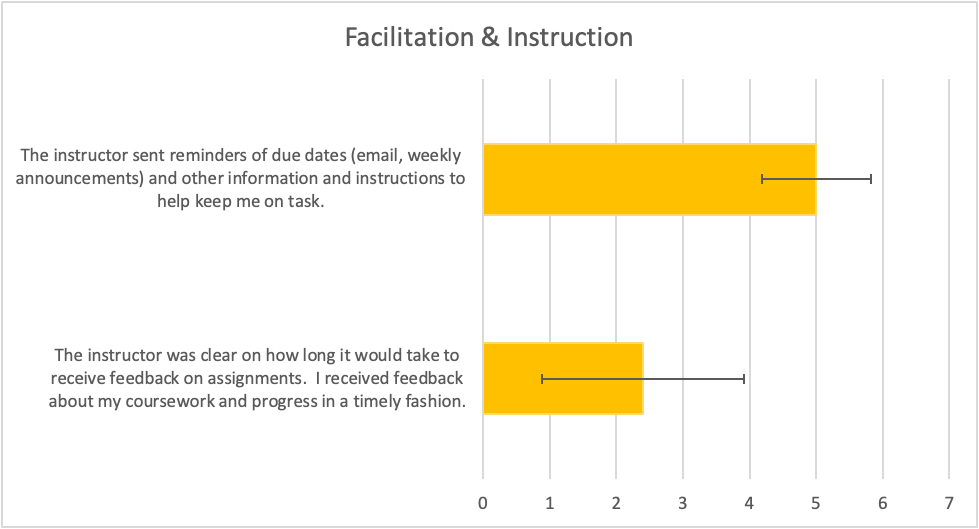

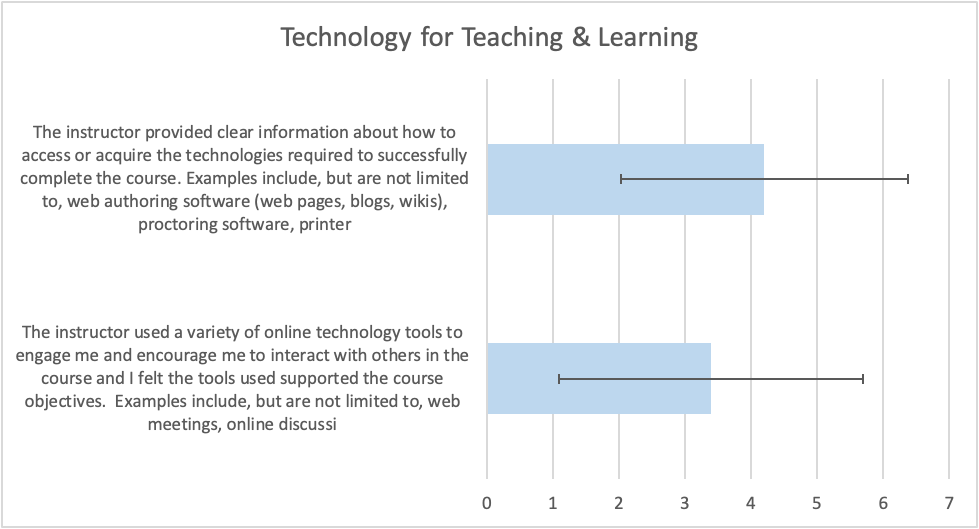

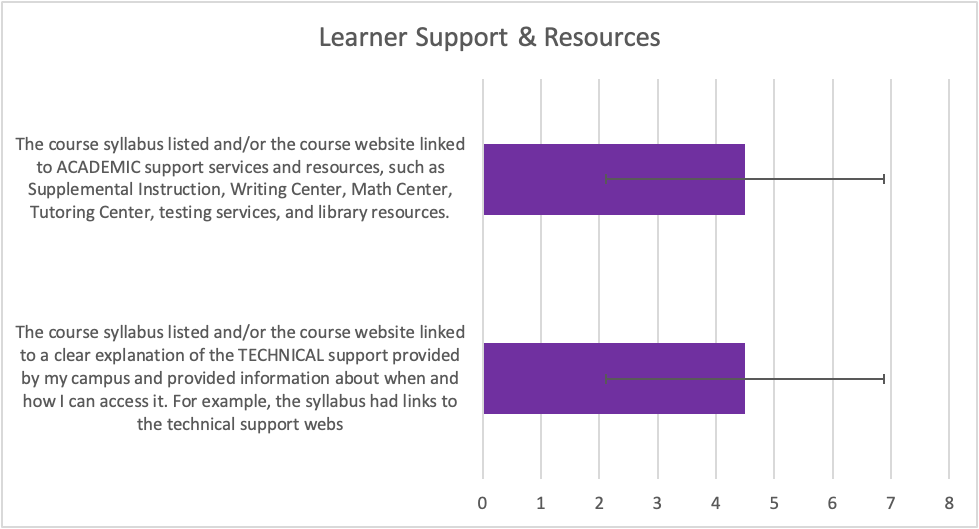

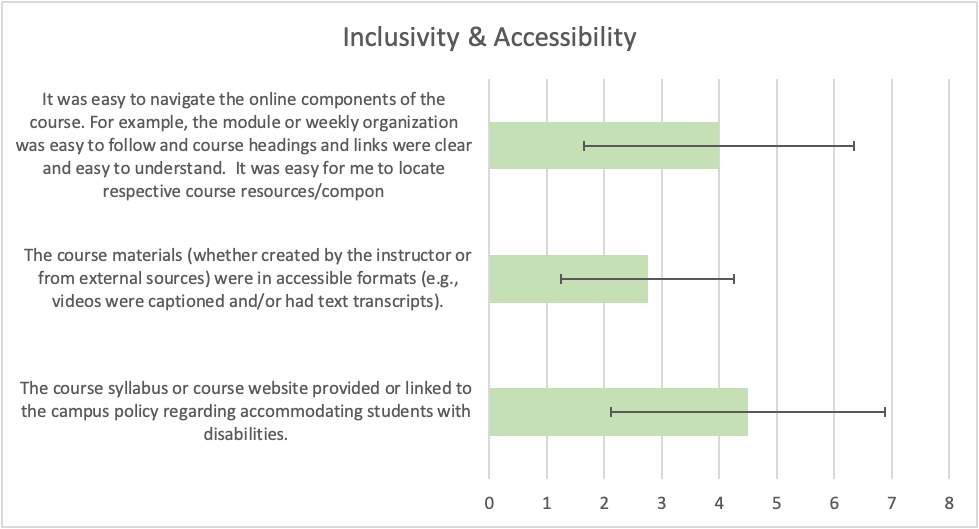

In addition to the questions pertaining to course details and student demographics, there were 25 questions asking students to rate their agreement with a statement on a six-point scale from Strongly Disagree (1) to Strongly Agree (6). There were 4 questions pertaining to Course Overview and Introduction, 5 questions pertaining to Assessment and Evaluation of Student Learning, 4 questions addressing Instructional Materials and Resources Utilized, 3 questions addressing Student Interaction and Community, 2 questions pertaining to Facilitation and Instruction, 2 questions pertaining to Technology for Teaching and Learning, 2 questions addressing Learner Support and Resources, and 3 questions addressing Inclusivity and Accessibility.

Descriptive statistics are presented in the Table. The sample size was extremely low and there was one respondent who consistently provided low ratings (1, strongly disagree) and another who responded “no opinion” to all of the questions. Due to the small sample size, these responses affect the quality and reliability of the data, and the former respondent greatly reduces the means and increases the standard deviations compared to previous years. This respondent was clearly dissatisfied with the course. The sample size for each question therefore was 4 or 5, depending on whether there were additional “no opinion” responses to a question. Medians were also calculated and some were higher than the mean while others were lower than the mean. It would have been desirable to have a larger sample size but despite our attempts this is the largest sample size we were able to obtain. It was encouraging that the maximum response was Strongly Agree (6) on most questions.
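The effect of a single consistently low rater on a sample this small can be illustrated with a short sketch. The ratings below are hypothetical and are not the survey's actual responses (the report does not list individual responses); the `describe` helper is likewise an illustrative name, and the sketch assumes the standard deviation is the sample standard deviation.

```python
from statistics import mean, median, stdev


def describe(ratings):
    """Summarize ratings on the 1-6 agreement scale."""
    return {
        "n": len(ratings),
        "min": min(ratings),
        "max": max(ratings),
        "mean": round(mean(ratings), 2),
        "median": median(ratings),
        "sd": round(stdev(ratings), 3),  # sample SD (n - 1 denominator)
    }


# Hypothetical ratings for one item, NOT actual survey responses:
# four fairly satisfied students plus one who answers 1 throughout.
with_low_rater = [1, 5, 5, 6, 6]
without_low_rater = [5, 5, 6, 6]

summary_with = describe(with_low_rater)
summary_without = describe(without_low_rater)
print(summary_with)
print(summary_without)
```

With these made-up values, the single rating of 1 lowers the mean from 5.50 to 4.60 and raises the standard deviation from 0.577 to 2.074, while the median moves only from 5.5 to 5. This is why the median is a useful companion to the mean when n is 4 or 5.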

The average responses for the Course Introduction and Overview questions ranged from 2.80 to 5.50 (Figure 1). The lowest average pertained to whether the course description included the purpose and format of the course and any applicable prerequisite knowledge.

The average responses for the Assessment and Evaluation of Student Learning questions ranged from 2.40 to 4.40 (Figure 2). The question with the lowest mean and the lowest maximum response (4) pertained to the instructor making it clear how work would be evaluated.

Figure 1: Mean responses to questions about course overview and introduction. In all graphs, error bars depict the standard deviation.


Figure 2: Mean responses to questions about assessment and evaluation of student learning.

For the questions addressing Instructional Materials and Resources Utilized, the average responses ranged from 4.00 to 4.50 (Figure 3). For the questions addressing student opinions on Student Interaction and Community, the averages ranged from 1.60 to 4.00 (Figure 4). The lowest mean pertained to whether the learning activities encouraged the student to log on and interact with classmates.

Figure 3: Mean responses to questions about instructional materials and resources utilized.


Figure 4: Mean responses to questions about student interaction and community.


There were two questions on Facilitation and Instruction (Figure 5). The average responses were 5.00 and 2.40. The question with the lower mean and lower maximum response (4) pertained to timeliness of feedback on work. The average ratings for the two questions pertaining to Technology for Teaching and Learning were 3.40 for the use of a variety of technology tools to engage the class and encourage them to interact, and 4.20 for providing clear information on how to access/acquire the required technologies (Figure 6).

Figure 5: Mean responses to questions about facilitation and instruction.


Figure 6: Mean responses to questions about technology for teaching and learning.


The average response was 4.50 for each of the questions on Learner Support and Resources (Figure 7).

Figure 7: Mean responses to questions about learner support and resources.


Finally, average ratings for the Inclusivity and Accessibility items ranged from 2.75 to 4.50 (Figure 8). The question with the lowest mean and lowest maximum response (4) pertained to accessible format for course materials.

Figure 8: Mean responses to questions about inclusivity and accessibility.


Table. Descriptive statistics for the 25 items in the Student Impact Survey completed in AY 2021-2022, n = 6

| Question | Minimum | Maximum | Mean | Std Deviation |
|---|---|---|---|---|
| Course Overview and Introduction | | | | |
| The instructor provided clear and detailed instructions for how to begin accessing all course components, such as syllabus, course calendar, and assignments. | 1 | 6 | 3.60 | 2.074 |
| Detailed information about the instructor was available and included multiple ways to contact him/her, times s/he was available, a brief biography, and a picture or welcome video. | 1 | 6 | 4.20 | 2.049 |
| The course description included the purpose and format (e.g., fully online, blended; schedule/calendar specifies dates/times) of the course, as well as any applicable prerequisite knowledge (e.g., prerequisite course). | 1 | 5 | 2.80 | 1.643 |
| The instructor clearly defined academic integrity and/or provided a “code of ethics” and provided institutional policies and/or links to those policies (e.g., academic dishonesty, cheating, and plagiarism). | 4 | 6 | 5.50 | 1.000 |
| Assessment and Evaluation of Student Learning | | | | |
| The instructor provided specific, well-defined, and measurable learning objectives. I understood what I was supposed to accomplish both weekly and by the end of the course. For example, each week there were specific learning goals and I knew exactly what I was supposed to learn/accomplish (e.g., there was a bulleted list of activities to complete each week). | 2 | 6 | 4.25 | 1.708 |
| I understood how the learning activities (including the assignments and ungraded activities) helped me achieve the learning objectives each week. For example, I understood how a discussion forum could help me prepare to develop a “reaction paper” on a topic. | 1 | 6 | 4.40 | 2.074 |
| The instructor made it clear how individual papers, exams, projects, and/or group contributions would be evaluated. For example, I was given grading sheets or detailed descriptions of how points were distributed for major assignments. | 1 | 4 | 2.40 | 1.517 |
| The instructor provided a course grading policy that clearly defined how much each assignment or category of assignments contributed to my overall course grade. | 1 | 5 | 3.00 | 1.871 |
| I was given opportunities to receive feedback from my instructor and to self-check my progress in the course. For example, my instructor posted grades regularly, provided comments on my work, had us self-grade assignments, allowed us to submit drafts of projects for comments, and offered discussion forums for feedback and practice tests. | 1 | 6 | 3.20 | 2.280 |
| Instructional Materials and Resources Utilized | | | | |
| The instructor gave me adequate notice and time to acquire course materials. For example, I received information on how to obtain the course textbook/materials prior to the start of the course via email, or the instructions for how to acquire the materials were in the syllabus or elsewhere in the course. | 2 | 6 | 4.50 | 1.732 |
| The instructor offered a variety of course material types (such as audio, video, and readings) and perspectives. S/he did not over-rely on a single way to deliver content such as via text or from a single source/textbook or author. | 2 | 6 | 4.25 | 1.708 |
| The materials supported the content of what I was learning in the course. For example, the textbook, articles, audio recordings, and videos were all tied to the course topics and objectives. | 1 | 6 | 4.20 | 1.924 |
| The instructor provided a good explanation to show how the instructional materials (e.g., textbook, videos organized by topics) support the course objectives or competencies. | 1 | 6 | 4.00 | 2.000 |
| Student Interaction and Community | | | | |
| The instructor provided an opportunity at the beginning of the course for students to introduce themselves. This created a sense of community among course participants. | 2 | 5 | 4.00 | 1.414 |
| The learning activities (e.g., discussions and activities) encouraged me to log on and interact with my fellow classmates often. | 1 | 2 | 1.60 | 0.548 |
| The course learning activities helped me understand fundamental concepts and build skills that will be useful in the real world. For example, the activities made connections with real-world problem solving, and involved real-world scenarios. | 1 | 5 | 3.60 | 1.673 |
| Facilitation and Instruction | | | | |
| The instructor was clear on how long it would take to receive feedback on assignments. I received feedback about my coursework and progress in a timely fashion. | 1 | 4 | 2.40 | 1.517 |
| The instructor sent reminders of due dates (email, weekly announcements) and other information and instructions to help keep me on task. | 4 | 6 | 5.00 | 0.817 |
| Technology for Teaching and Learning | | | | |
| The instructor used a variety of online technology tools to engage me and encourage me to interact with others in the course and I felt the tools used supported the course objectives. Examples include, but are not limited to, web meetings, online discussions (e.g., VoiceThread), online collaboration tools (e.g., Google Docs), social media tools (e.g., Twitter). | 1 | 6 | 3.40 | 2.302 |
| The instructor provided clear information about how to access or acquire the technologies required to successfully complete the course. Examples include, but are not limited to, web authoring software (web pages, blogs, wikis), proctoring software, printers, scanners, browser plug-ins or media players. | 1 | 6 | 4.20 | 2.168 |
| Learner Support and Resources | | | | |
| The course syllabus listed and/or the course website linked to a clear explanation of the TECHNICAL support provided by my campus and provided information about when and how I can access it. For example, the syllabus had links to the technical support website, Help Desk contacts, and online tutorials. | 1 | 6 | 4.50 | 2.380 |
| The course syllabus listed and/or the course website linked to ACADEMIC support services and resources, such as Supplemental Instruction, Writing Center, Math Center, Tutoring Center, testing services, and library resources. | 1 | 6 | 4.50 | 2.380 |
| Inclusivity and Accessibility | | | | |
| The course syllabus or course website provided or linked to the campus policy regarding accommodating students with disabilities. | 1 | 6 | 4.50 | 2.380 |
| The course materials (whether created by the instructor or from external sources) were in accessible formats (e.g., videos were captioned and/or had text transcripts). | 1 | 4 | 2.75 | 1.500 |
| It was easy to navigate the online components of the course. For example, the module or weekly organization was easy to follow and course headings and links were clear and easy to understand. It was easy for me to locate respective course resources/components. | 1 | 6 | 4.00 | 2.345 |

Two of the three faculty participants in the 2021-2022 EOQA program were interviewed upon completion of the program. They were asked about the changes they had made or planned to make to their courses based on their EOQA training, and about specific components of the EOQA program. Participants were enthusiastic about what they had learned in the program and about the positive changes they were making to their courses as a result of the training. Changes included revisions to course structure, pages, modules, and content; greater consistency; converting points to percentages; adding CLOs for each assignment; revising CLOs to use active verbs; applying best practices; and ensuring that all types of interaction occur (e.g., student-teacher interaction).

In terms of specific webinar topics, the UDL/DI webinar was useful for one participant, who will be giving students choices of modules to complete in a course, and will incorporate more variety into assignments.

The Equity and Accessibility webinar was less useful: one participant had already learned this information and knew that links should be updated each semester, and the other felt that more training on the technical skills needed to implement the required features would be helpful.

The Lecture Capture/Storytelling webinar was too advanced for one participant, who suggested that hands-on training, practice, and feedback would be very beneficial. The other participant doesn’t have a need for lecture capture in synchronous classes.

The Copyright webinar was useful and it would be helpful to have a handout or checklist to use each semester. This is a good suggestion that would help all faculty on campus. If there is a handout online, perhaps the link could be disseminated in the CFD emails.

The facilitators were responsive and accessible; their answers were helpful and elaborate but sometimes they were slower to grade or respond. They understood that everyone is overwhelmed at SJSU these days. Peer review was time-consuming but worthwhile.

Participants would highly recommend the program to their colleagues, and it is a great way to connect with peers in other departments. It is helpful to understand the student perspective. The online training is convenient for those who don’t want to go to campus if they don’t have to, and this was very much appreciated by one participant.

There weren’t any suggestions for major changes to the program.